Salesforce’s AI offering has expanded quickly over the last two years, and it’s now easy to lose track of which feature does what. Three layers matter: Einstein (the AI engine), the Einstein Trust Layer (the safety and privacy controls), and Agentforce (the autonomous agents on top). Each builds on the one before it, and understanding how they relate is a prerequisite for designing anything serious with them.

Salesforce Einstein

In 2016 Salesforce launched the Einstein platform, bringing predictive AI to its clouds: lead scoring, opportunity insights, case classification. These were the kind of “here is what the data probably means” features that turned a CRM from a system of record into something that could surface patterns across millions of records.

Almost a decade later, Einstein has grown to include generative AI as well: drafting sales emails, summarising case threads, generating service replies, the kinds of tasks where AI is genuinely better than a stressed human at 5pm on a Friday.

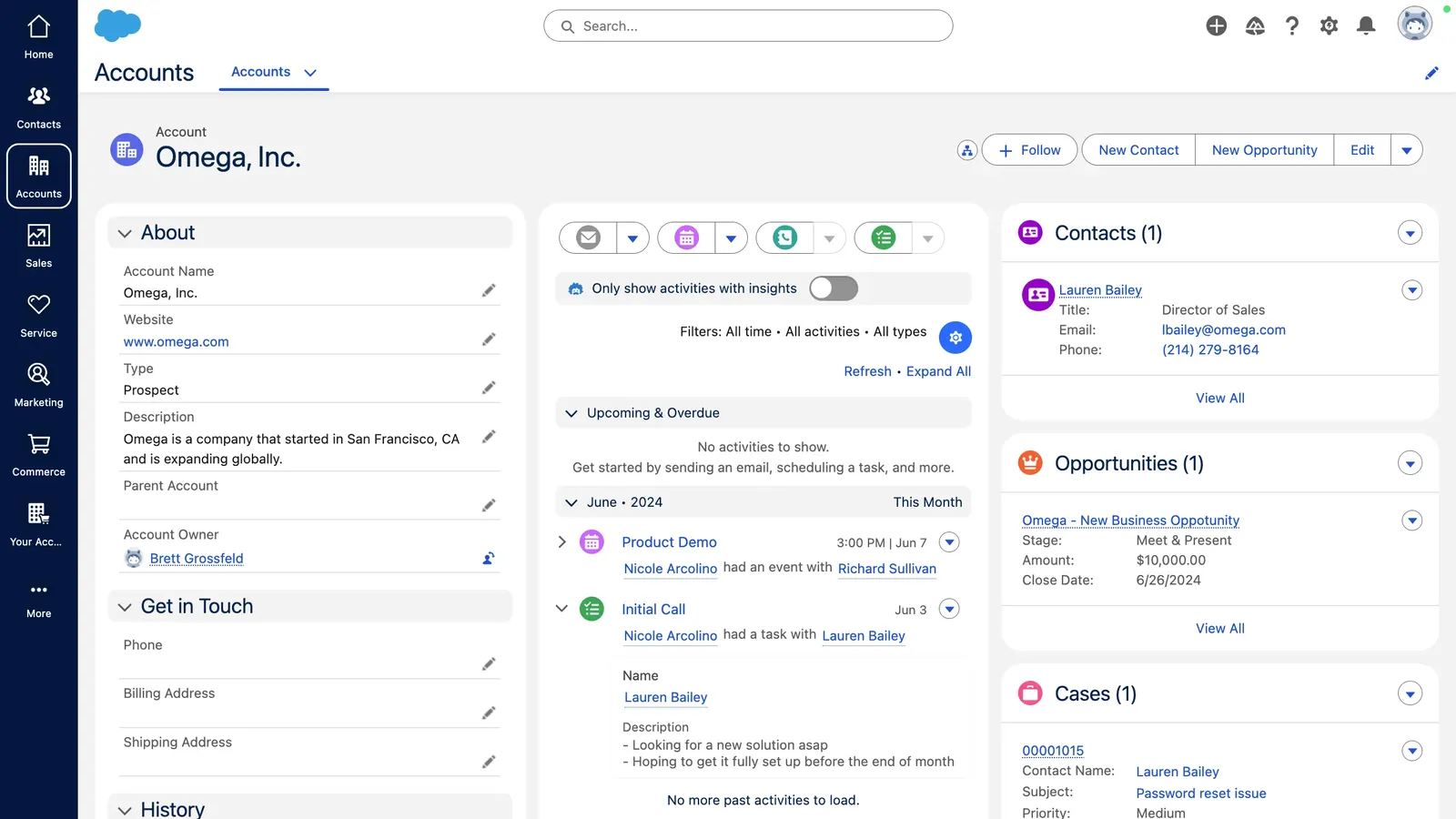

What makes Einstein different from a chat-based AI tool you’d use externally is that it is natively embedded into the Salesforce platform. It draws context from your CRM data and your connected external applications, which means its outputs are grounded in your business reality rather than generic training data. An Einstein-generated email about a specific opportunity knows about that opportunity. A generic LLM doesn’t.

A practical consequence: when an account executive (AE) uses Einstein to draft a follow-up after a call, the draft references the actual products discussed, the timeline the prospect mentioned, and the named decision-makers on the account. The AE didn’t type any of that in; the platform already knew it. That’s the difference between AI that saves you ten minutes and AI that’s worth keeping in the workflow.

The Einstein Trust Layer

The most common concern about AI in a CRM, and a reasonable one, is straightforward: how do I use AI features without exposing my company’s data to a third-party model? The Einstein Trust Layer is Salesforce’s answer.

The Trust Layer sits between the data stored in your CRM and the large language models that power generative features. It’s a set of agreements, security technology, and data and privacy controls designed to keep your company safe while you use generative AI.

Practically, it includes:

- Zero data retention. Under Salesforce’s agreements with its model providers, prompts and responses are not stored by the third-party LLM or used to train its models. Once the response comes back, the provider keeps nothing.

- Dynamic grounding with secure data retrieval. The right data gets attached to a prompt at the right moment, with permission checks applied. A user who can’t see an account in Salesforce can’t get the AI to summarise it for them either.

- Prompt defense. Protections against injection attacks and prompt manipulation.

- Data masking. Sensitive fields (PII, payment info, regulated data) are obscured before leaving the platform. The model sees a placeholder, returns a placeholder, and Salesforce reattaches the real value on the way back.

- Toxicity scoring. Outputs are scored for unsafe or inappropriate content before users see them.

- Audit trail. Every interaction is logged for governance and compliance review.
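To make the grounding and masking steps concrete, here is a minimal Python sketch of the order in which they happen: a permission check before any record is attached to the prompt, masking before the prompt leaves the platform, and unmasking on the way back. It is a toy under stated assumptions, not how Salesforce implements the Trust Layer: `User`, `Account`, `ground_prompt`, `mask`, `unmask` and `stub_model` are hypothetical names, only email addresses are masked (by a single regex), and the “model” is a local stub.

```python
import re
from dataclasses import dataclass, field

# Hypothetical sketch of the Trust Layer ordering:
# permission check -> grounding -> masking -> model call -> unmasking.
# None of these names are Salesforce APIs.

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


@dataclass
class User:
    name: str
    visible_account_ids: set[str] = field(default_factory=set)


@dataclass
class Account:
    account_id: str
    name: str
    contact_email: str
    notes: str


def ground_prompt(user: User, account: Account, task: str) -> str:
    """Attach CRM data to the prompt, but only if the user can see the record."""
    if account.account_id not in user.visible_account_ids:
        raise PermissionError(f"{user.name} cannot see account {account.account_id}")
    return (f"{task}\nAccount: {account.name}\n"
            f"Contact: {account.contact_email}\nNotes: {account.notes}")


def mask(text: str) -> tuple[str, dict[str, str]]:
    """Swap each distinct email address for a placeholder; return text and mapping."""
    mapping: dict[str, str] = {}  # placeholder -> real value
    seen: dict[str, str] = {}     # real value -> placeholder

    def _sub(match: re.Match) -> str:
        value = match.group(0)
        if value not in seen:
            placeholder = f"<EMAIL_{len(seen)}>"
            seen[value] = placeholder
            mapping[placeholder] = value
        return seen[value]

    return EMAIL_RE.sub(_sub, text), mapping


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Reattach the real values on the way back."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text


def stub_model(prompt: str) -> str:
    """Stand-in for the external LLM: it only ever receives the masked prompt."""
    placeholders = re.findall(r"<EMAIL_\d+>", prompt)
    target = placeholders[0] if placeholders else "the customer"
    return f"Draft: follow up with {target} about next steps."


if __name__ == "__main__":
    rep = User("Ana", {"001A"})
    acct = Account("001A", "Acme", "jo@acme.com", "Asked about renewal timing.")
    masked, placeholders = mask(ground_prompt(rep, acct, "Draft a follow-up email."))
    assert "jo@acme.com" not in masked
    print(unmask(stub_model(masked), placeholders))
```

The ordering is the point: the model only ever sees `<EMAIL_0>`, the real address exists only on the Salesforce side of the boundary, and a user without access to the account never gets as far as building a prompt.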

The Trust Layer is what separates “we plugged ChatGPT into our CRM” (a bad idea) from “we have a platform-native AI surface with controls a regulator can audit” (the answer for any company with real compliance obligations).

In our work, the Trust Layer is also where the most expensive misunderstandings happen. Teams skip the masking config, get six months in, and then realise customer PII has been flowing to an LLM in a way that doesn’t survive a security review. Setting it up correctly the first time is much cheaper than retrofitting it after a breach.

Agentforce

Agentforce is the layer above Einstein. Where Einstein makes a sales rep faster at writing an email, Agentforce takes the rep out of the loop entirely for the right kinds of work. It is a proactive, autonomous AI application that provides specialised, always-on support, either to your employees or directly to your customers.

You equip Agentforce with the business knowledge it needs for its specific role, and it executes against that knowledge, in your data, inside your security model. The agent isn’t a chatbot wrapper around an LLM. It’s a configured operator with permissions, topics, and actions, all governed by the same platform that runs the rest of your Salesforce.
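One way to picture “a configured operator with permissions, topics, and actions” is as data rather than prose. The sketch below is hypothetical: `Topic`, `AgentConfig`, `can_call` and the action names are illustrative, not Agentforce metadata or APIs, and real agents are configured on the Salesforce platform rather than in Python. What it shows is the design idea: each topic carries its own instructions and an explicit allow-list of actions, so what the agent may do is decided by configuration, not by the LLM.

```python
from dataclasses import dataclass, field

# Hypothetical shape of an agent definition: a role, topics, and an explicit
# allow-list of actions per topic. Illustration only, not Agentforce metadata.


@dataclass(frozen=True)
class Topic:
    name: str
    instructions: str                # what the agent should do within this topic
    allowed_actions: frozenset[str]  # the only actions this topic may invoke


@dataclass
class AgentConfig:
    role: str
    topics: dict[str, Topic] = field(default_factory=dict)

    def add_topic(self, topic: Topic) -> None:
        self.topics[topic.name] = topic

    def can_call(self, topic_name: str, action: str) -> bool:
        """An action is callable only inside a topic that explicitly lists it."""
        topic = self.topics.get(topic_name)
        return topic is not None and action in topic.allowed_actions


service_agent = AgentConfig(role="Answers order and warranty questions for customers")
service_agent.add_topic(Topic(
    name="order_status",
    instructions="Look up the customer's order and report its shipping status.",
    allowed_actions=frozenset({"get_order_status", "escalate_to_human"}),
))

print(service_agent.can_call("order_status", "get_order_status"))  # True
print(service_agent.can_call("order_status", "update_account"))    # False
```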

Two scenarios that come up most often:

Resolving customer inquiries. Agentforce engages customers autonomously across channels, 24/7, in natural language, with answers grounded in your business data rather than its imagination. For repetitive support cases (where is my order, how do I reset my password, what’s covered under my warranty) the agent handles the conversation end to end without a human getting involved. For more complex cases, it can hand off cleanly with full context, so the human picks up where the agent left off rather than starting over.

Engaging with prospects. Agentforce autonomously answers product questions, handles common objections, and books meetings for sales reps. It can respond accurately because its answers are grounded in your CRM and product data, not the LLM’s training set. The first thirty seconds of a sales conversation, the part that’s mostly disqualifying or qualifying basic fit, can move off the human team’s plate.

Where this stack tends to fail

In our experience, most Agentforce projects that struggle in production share the same two failure modes.

Ungrounded topics. The team configures a topic prompt that says “the agent should help with billing inquiries” and trusts the LLM to figure out what billing means in your system. Without explicit grounding to real Data Cloud DMOs or Salesforce objects, the agent invents plausible-sounding entities. Users see this as the agent making things up. Engineers see it as a missing schema. We wrote about this in detail in “Agentforce design without hallucinated context”.
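A minimal sketch of what explicit grounding can look like, assuming you can extract the (object, field) pairs an agent proposes to use from its query or action input (that extraction is not shown). `BILLING_SCHEMA`, `schema_prompt`, `unknown_references` and the `Invoice__c` custom object are hypothetical; substitute the objects or Data Cloud DMOs your org actually uses. The schema does double duty: it is rendered into the topic’s context so the model sees real names, and it is used to reject any reference the model invented.

```python
# Hypothetical grounding map for a "billing" topic: the business term is tied to
# named objects and fields instead of being left to the LLM to interpret.
# Invoice__c and its fields are placeholders for whatever your org actually uses.
BILLING_SCHEMA: dict[str, set[str]] = {
    "Account": {"Id", "Name", "BillingCountry"},
    "Invoice__c": {"Id", "Status__c", "Amount__c", "Due_Date__c"},
}


def schema_prompt(schema: dict[str, set[str]]) -> str:
    """Render the declared objects and fields as explicit context for the topic."""
    lines = [f"{obj}: {', '.join(sorted(fields))}" for obj, fields in sorted(schema.items())]
    return "Only reference these objects and fields:\n" + "\n".join(lines)


def unknown_references(refs: list[tuple[str, str]],
                       schema: dict[str, set[str]]) -> list[str]:
    """Return every Object.Field the agent referenced that the schema does not declare."""
    return [f"{obj}.{fld}" for obj, fld in refs if fld not in schema.get(obj, set())]


print(schema_prompt(BILLING_SCHEMA))
# An agent output that mentions an invented "PaymentPlan" object gets flagged:
print(unknown_references([("Invoice__c", "Status__c"), ("PaymentPlan", "Tier")], BILLING_SCHEMA))
```

Flagging `PaymentPlan.Tier` before anything runs turns the “agent making things up” symptom into a concrete, fixable schema gap.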

Unbounded actions. An action wired to “update the account” with no preconditions, no validation, no logging of why the agent decided to call it. The action works ninety percent of the time. The other ten percent silently corrupts your data, and the audit trail is “the agent did it.” Treat actions like API endpoints, not natural-language conveniences.
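Here is what “treat actions like API endpoints” might look like for the account-update example, as a minimal Python sketch. `AccountUpdate`, `update_account`, `UPDATABLE_FIELDS`, `ActionRejected` and the in-memory record store are hypothetical stand-ins; in a real org this logic would live in whatever backs the action (for example an Apex invocable method or a Flow). The sketch checks preconditions, validates input, requires the agent to state a rationale, and logs both accepted and rejected calls.

```python
import logging
from dataclasses import dataclass
from typing import NoReturn

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent.actions")

# Hypothetical allow-list: the only Account fields this action may change.
UPDATABLE_FIELDS = {"Phone", "BillingCity"}


class ActionRejected(Exception):
    """Raised when an agent-proposed action fails a precondition or validation."""


@dataclass
class AccountUpdate:
    account_id: str
    field: str
    new_value: str
    rationale: str  # the agent's stated reason, kept for the audit trail


def _reject(req: AccountUpdate, reason: str) -> NoReturn:
    log.warning("update_account rejected for %s: %s", req.account_id, reason)
    raise ActionRejected(reason)


def update_account(req: AccountUpdate, store: dict[str, dict[str, str]]) -> None:
    """Apply one field change to an in-memory stand-in for Account records."""
    # Preconditions: the record exists and the field is explicitly allowed.
    if req.account_id not in store:
        _reject(req, f"unknown account {req.account_id}")
    if req.field not in UPDATABLE_FIELDS:
        _reject(req, f"field {req.field} is not updatable by the agent")
    # Validation: refuse blank values and unexplained changes.
    if not req.new_value.strip():
        _reject(req, "blank value")
    if not req.rationale.strip():
        _reject(req, "missing rationale")
    old = store[req.account_id].get(req.field)
    store[req.account_id][req.field] = req.new_value
    # Log what changed, from what, and why, so the audit trail is not "the agent did it".
    log.info("update_account %s.%s %r -> %r; rationale: %s",
             req.account_id, req.field, old, req.new_value, req.rationale)


if __name__ == "__main__":
    accounts = {"001A": {"Phone": "555-0100"}}
    update_account(AccountUpdate("001A", "Phone", "555-0199",
                                 "Customer gave a new number in chat"), accounts)
    try:
        update_account(AccountUpdate("001A", "AnnualRevenue", "1000000", "Guessed"), accounts)
    except ActionRejected as exc:
        print("rejected:", exc)
```

The design choice is to fail loudly: a rejected call raises and leaves the record untouched, so bad calls show up in the logs instead of in your data.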

What this means for design

The reason this stack matters is that the three layers are not optional or interchangeable. Skipping the Trust Layer to ship faster creates real liability. Skipping Agentforce design rigour and treating it like a chatbot creates the failure modes above.

When the layers are designed properly, Salesforce AI is a real step-change in what a CRM can do for you. When they aren’t, it is a confident hallucination machine wired into your customer relationships. The difference comes down almost entirely to design, not to which features get turned on.